Tech workers are burning through massive amounts of AI computing in a race to code faster and automate more work. In Silicon Valley, the practice is known as “tokenmaxxing,” where employees push their use of ChatGPT and other large language models to the limit to maximize productivity.

The trend has accelerated alongside the growth of AI coding tools and agents that can handle increasingly complex tasks. Unlike the occasional ChatGPT or Claude query for writing help, code generation and agent workflows consume far more tokens, the units of data that AI models process when users enter requests or when the models generate responses. Within tech startups and firms, token usage is increasingly becoming an indicator of how much employees rely on AI in their day-to-day work, with some engineers even leaving coding agents running overnight to speed up product development.

But not every enterprise AI leader believes that more usage automatically translates to better results. “I think this [tokenmaxxing] is going to be a short, short-lived cycle,” Chris Bedi, ServiceNow’s chief customer officer, told Observer at the 2026 ServiceNow Knowledge event this week. “There’s a bill to pay for those tokens.”

ServiceNow is a leading cloud platform that helps enterprises manage, automate and design workflows. Approximately 90 percent of Fortune 500 companies, including Nvidia, AT&T and Delta Air Lines, use its products. In the first quarter of 2026, ServiceNow generated $3.67 billion in subscription revenue, a 19 percent year-over-year increase that comes as the company makes AI central to its business strategy.

One of its key products is AI Control Tower, a platform that allows customers to oversee AI deployments, including tracking agent behavior and measuring return on investment (ROI). The company also offers an “autonomous workforce” pool of “AI specialist” agents, which it has expanded to execute workflows across IT, customer relationship management, and security and risk, among other functions. It also invests heavily in AI skills training through ServiceNow University, a platform designed to train workers to use AI in their jobs.

As AI agents take on larger roles in the workforce, Bedi says ServiceNow aims to help customers maximize value without excessive overhead. But in his conversations with enterprise customers, tokenmaxxing isn’t top of mind. “When I talk to the C-suite, tokenmaxxing doesn’t come up,” Bedi said.

Bedi argues that the trend conflates activity with value. “It’s almost like measuring a restaurant based on the number of ingredients they buy,” he said. “You don’t measure a restaurant that way, I wouldn’t.” For example, a worker who prompts an AI chatbot dozens of times to generate code may end up with the same result as someone who gets there in just a few requests.

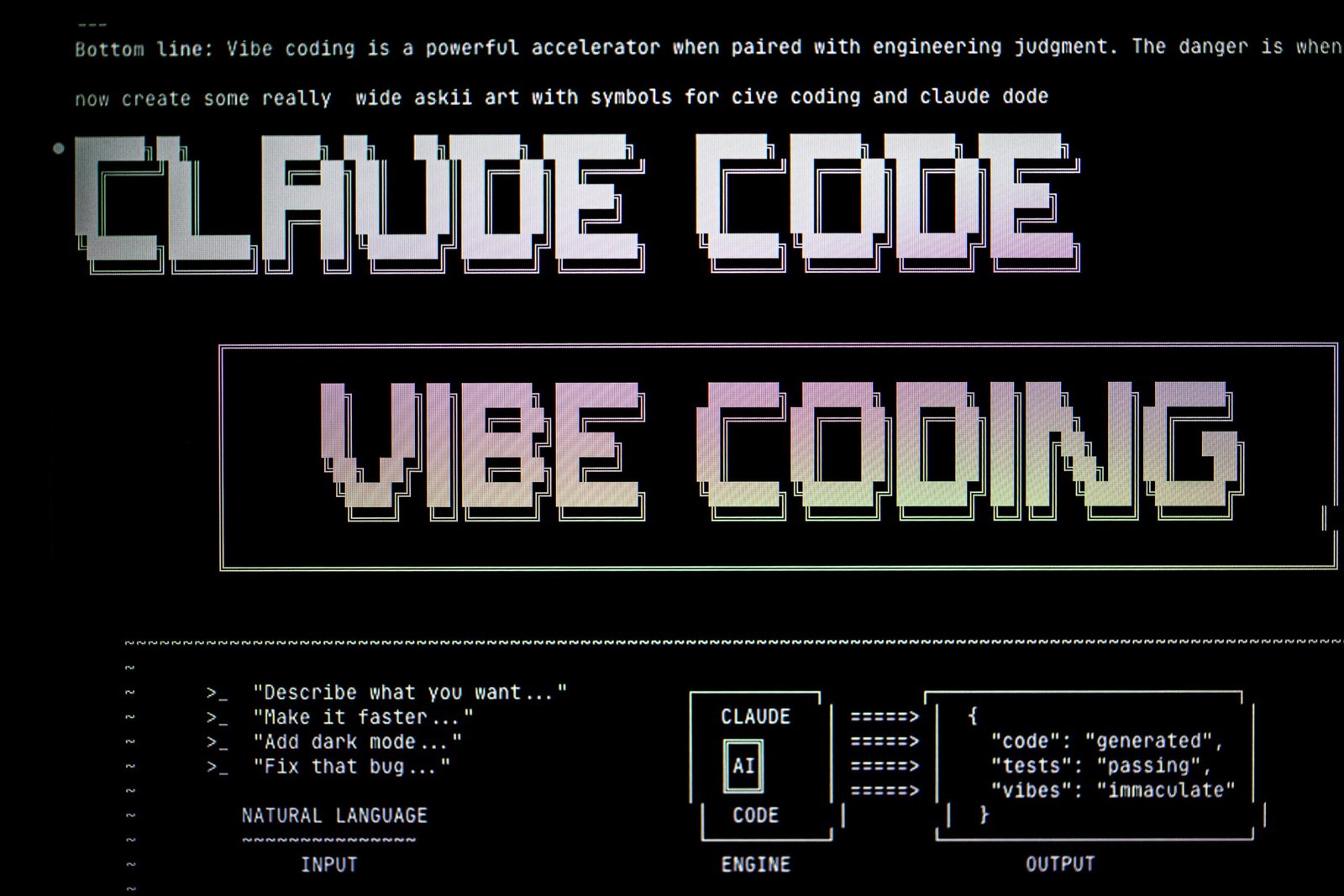

His skepticism comes as the use of AI within tech companies is exploding. According to The New York Times, employees at AI firms are consuming staggering amounts of computing internally, with one Anthropic employee reportedly racking up a $150,000 bill in a single month using Claude Code. Tokenmaxxing has become emblematic of how quickly the costs of AI experimentation can spiral as workers face pressure to augment their workflows. As employers increasingly mandate the adoption of AI at work, token usage is expected to grow even more.

This growth has been a boon for providers of AI models that charge based on token consumption. OpenAI says its ChatGPT APIs process more than 15 billion tokens per minute. Google’s Gemini models now process more than 16 billion tokens per minute, a 60 percent increase year over year, according to the company’s latest earnings report. For AI providers, enterprise adoption creates a powerful incentive structure: the more workers rely on AI, the more revenue those systems generate.

Some tech companies have encouraged employees to maximize token usage internally. At Meta, an employee created an internal leaderboard tracking token usage and highlighting top users, The Information reported in April. After the project became public and sparked debate over the value of ranking token consumption, the leaderboard was taken down.

Generous token budgets are increasingly being treated as a perk, much like premium software or free meals. At Nvidia’s annual GTC conference in March, CEO Jensen Huang said engineers should expect annual token budgets worth roughly half of their already high salaries, on top of base pay, so their output “could be boosted 10X.”

However, a growing number of executives argue that tokenmaxxing risks becoming another Silicon Valley vanity metric. Yamini Rangan, HubSpot’s CEO, recently argued on LinkedIn that maximizing outcomes beats maximizing tokens, meaning that measurable business results matter more than usage. Andrew Lau, CEO of engineering intelligence firm Jellyfish, shared a similar view, calling tokenmaxxing a “starting point” to boost growth.

According to Bedi, the value of AI is best measured by whether it significantly improves performance. Companies are still relying on familiar metrics: how much time workers save, how much output they produce, and whether AI improves operational efficiency.

While less conspicuous than token counts, traditional business outcomes remain key to quantifying AI ROI, Bedi said. “The overall goal is, how do I help my workforce be as AI-savvy as possible and how do I help them feel comfortable using it?”