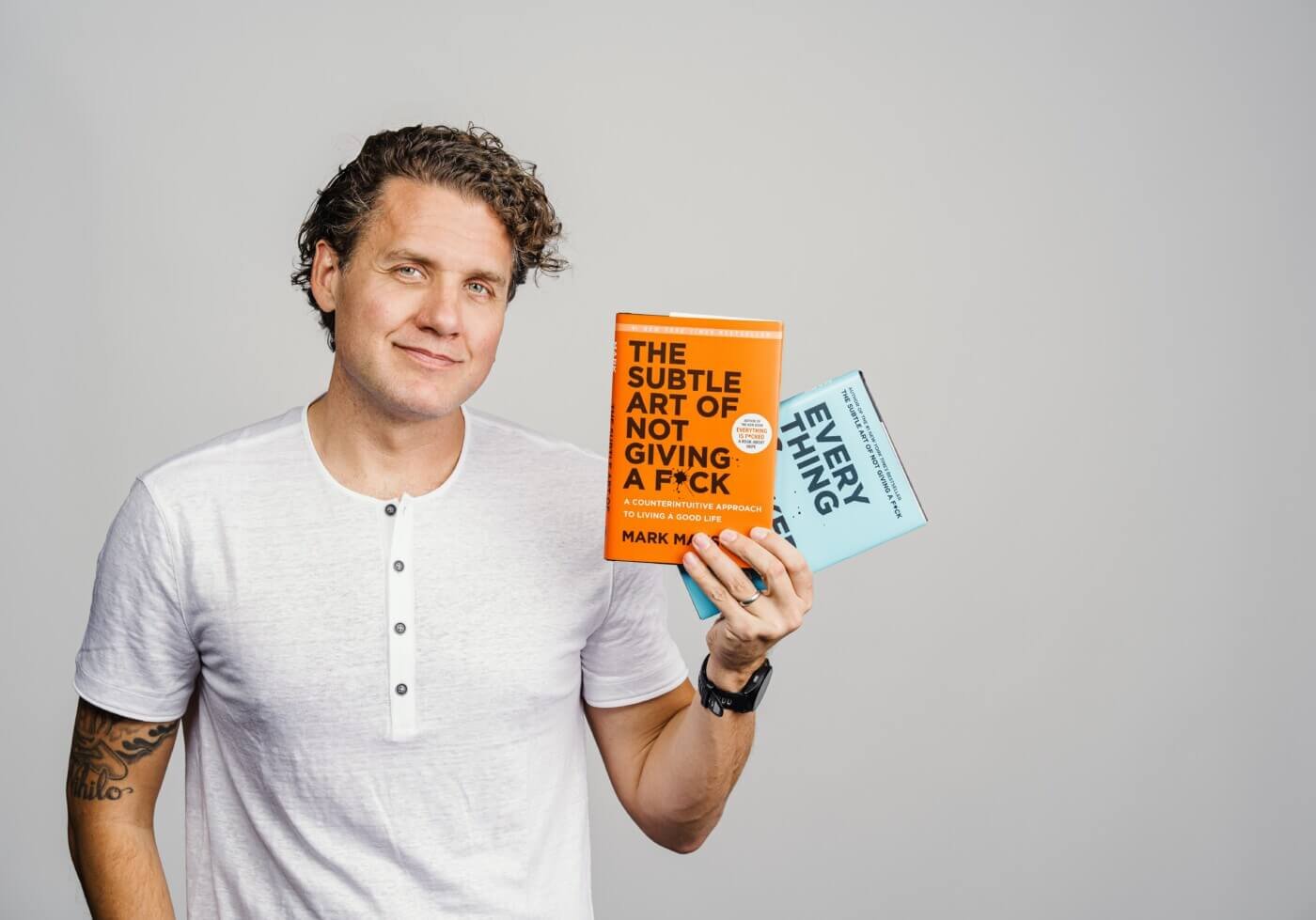

Ten years after publishing The Subtle Art of Not Giving a F*ck, best-selling author and blogger Mark Manson is turning to AI to address some of his audience’s most pressing questions about life. Most recently, he co-founded Purpose, an AI-powered mentor designed to give practical life advice, something Manson says most generic chatbots, like ChatGPT, are not designed to do.

Manson is also known for Everything Is F*cked: A Book About Hope and for co-writing Will with actor Will Smith, a memoir that chronicles the celebrity’s struggles and personal growth. He began his career in 2008, starting a blog shortly after graduating from Boston University. What started as a dating advice column quickly evolved into a platform for deeper reflections on happiness, success and modern self-help. The blog launched Manson’s publishing career and, over time, earned him nearly two million followers on Instagram.

Since artificial intelligence entered the mainstream, Manson has been upbeat about its potential to improve the way people seek guidance. After exploring ways to enter the market, including possibly buying an existing company, he chose instead to build something new with tech entrepreneur Raj Singh, founder of Google-backed hospitality startup Go Moment. Singh’s company was acquired by Revinate in 2021; after leaving in 2024, he turned his focus to mental health technology. Purpose’s engineering manager, William Kearns, previously led AI at meditation and wellness platform Headspace.

Purpose has launched a website and an iOS app, with an Android version expected later this month. So far, about 50,000 people have joined the platform, with about one in four paying for a premium subscription that costs $20 a month or $150 a year.

The Observer spoke to Manson about mental health safety, what AI gets right and wrong in the advice space, and where the line lies between mentoring and therapy.

The following conversation has been edited for length and clarity.

How did you and your co-founder, Raj, connect? Who came to whom with the problem you wanted to solve?

We sat next to each other at a poker game, so it was completely coincidental. I was actually trying to buy another AI startup and had run into a snag. Raj had just exited his previous company and had already decided, independently, that whatever he did next, he wanted it to be in mental health and AI. About a month later, in March 2025, we had a business.

How do you use AI chatbots in your life and what are your favorites?

I use AI all the time instead of Googling things, for business questions and health questions. I was watching the movie Hamnet the other night, and I stopped to have a chat with Claude about Shakespeare, and it was absolutely fascinating. Claude is definitely a favorite in terms of taste and quality of writing. As a writer, I care a lot about the quality of writing.

I’ve had a lot of fun messing around with some of the Character.AI products. It’s almost like fan fiction. But for everyday use, I mostly use Claude and Gemini.

You mentioned that the Purpose team cares about mental health. I have written about AI psychosis and related issues. Purpose makes clear that it is not a therapist, and it limits access so that conversations have time to “sink in,” so I see you are putting limits on engagement. I’m curious about your concerns with AI companions being addictive or reinforcing unhealthy thought patterns, and how you’ve tried to mitigate that in your app.

If you look at AI psychosis cases, many of them seem to be driven by sycophancy. The AI just agrees with whatever you say. It’s like, “Oh, you think you’re the Queen of England. That’s fantastic. Tell me more about that.” These chatbots are not disagreeable enough; they are not willing to challenge you, to keep you grounded in reality.

One of the first things we considered when designing Purpose was that it should challenge the user. It can’t agree with everything the user says. This also fits our mission. You grow from being wrong about things. You grow by reevaluating your beliefs and questioning your assumptions. It was extremely important to us that Purpose actively challenges users and pushes them to reevaluate some of their preconceived notions.

Additionally, we have some pretty strict guardrails. For anything that looks like it could be a clinical-level situation, Purpose is designed to refer the user to a local professional.

In fact, there’s what’s being called a new industry standard for mental health safety in AI, VERA-MH. It runs 400 simulated clinical conversations and judges whether the AI is safe or not. We scored 100 percent on risk detection across all 400 conversations and placed in the top 0.5 percent of AI systems rated against that benchmark.

How skeptical are you about using AI for emotional support, relationships or life advice? And how are you trying to address those concerns with your product?

The big AI companies woke up last year to the need for safety precautions and to the negative side effects. I think AI has a ton of potential to create value for people in this space. The technology is not there yet, but it is improving.

What would it take for technology to get there?

With Purpose, we’ve modified the AI’s mission. That part is not so difficult. I think anyone with six months to develop an app could probably do something similar. What’s really hard is memory and pattern matching.

The way LLMs work, the more information you give them, the less accurate they become. That’s why ChatGPT’s memory, or Claude’s memory, isn’t very good: they have so much random information about you that it’s hard to keep track of what’s useful for a given conversation and what’s not.

The second part of it is salience. If a user is talking about their mother, that’s probably a very important thing in their life, and it’s definitely more important than what they had for breakfast or what kind of car they drive. But right now, AI doesn’t know how to prioritize one fact about someone over another. You need to find ways to do this programmatically. Otherwise, the AI will fixate on some random fact about you.

I don’t think memory has really been solved by anyone, especially the big AI companies. When thinking about personal growth and life advice, memory is so important. If you have a conversation with Purpose about something that happened when you were 17, that’s probably a very important thing to remember when you come back three months later. I would say that right now the biggest obstacle is memory.

Where do you think we should draw the line on using AI in the intimate parts of our lives, and in what ways are AI companies around the world missing the mark on this front?

It is inevitable that people will use AI for personal things. If you’re stressed and still up at one in the morning, you’re not going to call a therapist, and you’re not going to call a friend in the middle of the night on a Tuesday, but an AI is there. For me, the biggest thing is privacy: making sure user data is anonymized and respected.

While Purpose says it’s not a therapist, when I used it, it reminded me of therapy in the sense that it doesn’t tell you what to do but asks you questions that lead you to your own decision about how to move forward in your life. How are you walking the line between therapy and simple advice?

There are two different use cases for therapy. Some people go to therapy because they are in crisis and have a major life problem. Others go for maintenance, or mental hygiene. AI can do a good job with the latter. For example: “I had a fight with my partner. What do you think about it?” You can get a lot of mileage out of an AI in those situations, especially given its accessibility, affordability and availability.

Where we draw the line is when people are in that crisis category and are showing very severe signs of distress or depression. That is where we direct them to look for a professional. I still wouldn’t feel comfortable using AI for that use case.

Someone in my life has struggled with eating disorders in the past. They were using Purpose, and when they started talking about some of the issues they were going through, not only did it correctly flag that they were likely dealing with an eating disorder, but it sent them a directory of clinicians in their area who specialize in those disorders. I was very happy to hear that. It’s doing exactly what it’s supposed to do.

Would the version of you who wrote The Subtle Art of Not Giving a F*ck be surprised by this venture?

I actually don’t think so. I started my first online course around 2010, and around the time the book came out in 2016, I had this dream of making a choose-your-own-adventure self-help course. I was frustrated that every course was on rails: you had to start here and go through it in order. So many people would drop off because it no longer connected with them. I actually started designing one around 2017 and got maybe a month in before it became clear that it was going to be so complicated and impractical that I abandoned it.

When ChatGPT took off and I started messing around with it, I realized that this is the technology that makes a choose-your-own-adventure course possible.